Entropy-Gated AI Framework

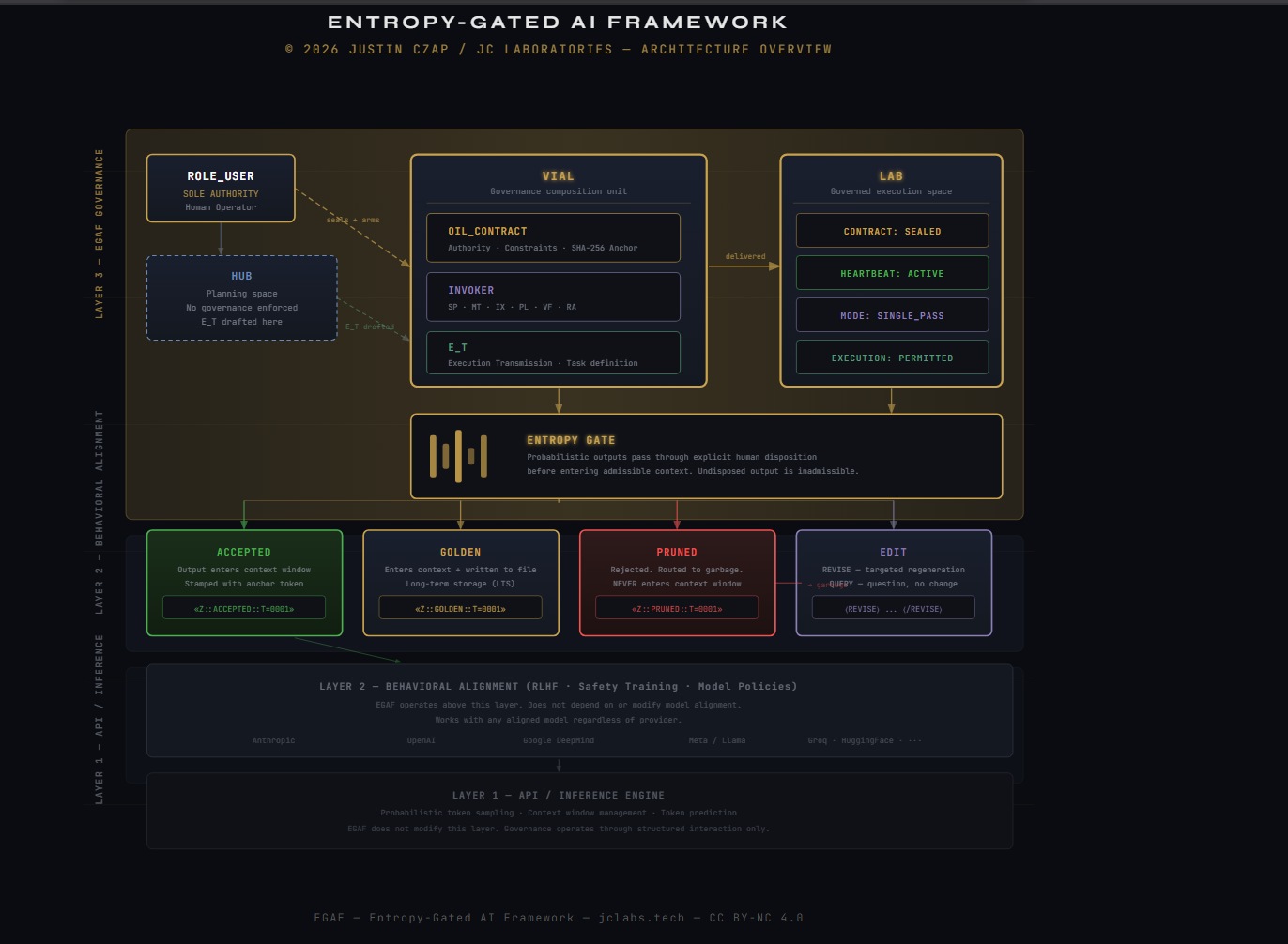

The Entropy-Gated AI Framework is a human-directed governance and execution architecture for using probabilistic language models in workflows where correctness, repeatability, and auditability are operational requirements rather than aspirational goals.

The framework introduces explicit control mechanisms that regulate when and how Probabilistic Reasoning is permitted to influence outputs or accepted state. These controls are applied at the interaction level (above the model, above the API, and independent of the provider) and are designed to constrain Interaction-Level Entropy across long-running sessions.

Rather than attempting to make a probabilistic system deterministic, the framework enables disciplined, Quasi-Deterministic behavior through explicit Governance, bounded Execution, and human-directed Acceptance. This approach aligns with established engineering practice in systems where uncertainty cannot be eliminated but must be controlled, documented, and audited.

The framework is model-agnostic and interface-agnostic. It does not depend on specialized runtimes, plugins, or SDKs. It operates entirely through structured interaction, authoritative Markdown governed by Structural Authority, and explicit operator decisions enforced by the ROLE_USER. As described by NIST, effective governance in complex systems depends on clearly defined roles, controlled change, and repeatable processes rather than automation alone (National Institute of Standards and Technology, 2011; National Institute of Standards and Technology, 2020).

Architecture overview — © 2026 Justin Czap / JC Laboratories · CC BY-NC 4.0

Why this framework exists

Large language models are inherently probabilistic systems. Their outputs are generated by sampling from probability distributions rather than by executing deterministic programs. This characteristic enables flexible reasoning and language generation, but it also introduces variability, inconsistency, and uncertainty that compound over time when interactions are not governed.

In practice, long-running or high-stakes AI interactions frequently degrade due to uncontrolled accumulation of uncertainty. Common failure patterns include gradual conversational drift, hallucinated or fabricated details, implicit assumptions becoming accepted facts through uncontrolled State Promotion, inconsistent results across retries, and an inability to reproduce or audit prior outcomes. These behaviors are well documented in both academic literature and industry analysis of AI-assisted systems (Bajpai, 2024; Thiyagarajan et al., 2024; McKinsey & Company, 2024).

These failures are not defects in the underlying models. They are a consequence of using probabilistic systems without explicit interaction Governance. As Shannon’s foundational work on information theory makes clear, uncertainty is a fundamental property of probabilistic communication systems, not an anomaly to be ignored (Shannon, 1948). When uncertainty is allowed to propagate implicitly across interactions, it becomes increasingly difficult to distinguish signal from noise or to determine which outputs should be trusted.
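As a small illustration of Shannon's measure, the sketch below computes the entropy, in bits, of two next-token distributions. The probability values are invented for illustration and do not come from any model. The framework's own term, Interaction-Level Entropy, is not defined by this formula in the text above; the example only shows how uncertainty in a single distribution can be quantified.

```python
import math


def shannon_entropy(probs):
    """Return the Shannon entropy, in bits, of a discrete distribution."""
    if abs(sum(probs) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    # Terms with p == 0 contribute nothing and are skipped to avoid log2(0).
    return -sum(p * math.log2(p) for p in probs if p > 0)


# Hypothetical next-token distributions (illustrative values only).
peaked = [0.97, 0.01, 0.01, 0.01]    # the model is nearly certain
uniform = [0.25, 0.25, 0.25, 0.25]   # the model has no preference

peaked_bits = shannon_entropy(peaked)
uniform_bits = shannon_entropy(uniform)
print(f"peaked: {peaked_bits:.3f} bits, uniform: {uniform_bits:.3f} bits")
```

The peaked distribution carries far less uncertainty than the uniform one, which is the sense in which a sampled output can be more or less predictable.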

The Entropy-Gated AI Framework exists to address this gap. It introduces a structured governance layer that makes uncertainty visible, bounded, and controllable. By requiring explicit execution boundaries through Execution Processes, human-directed Authority, and disciplined handling of rejection and Pruning, the framework prevents silent entropy accumulation and preserves the ability to review, reproduce, and justify outcomes after the fact.

This design philosophy aligns with established principles in configuration management, security governance, and audit-focused system design, where uncontrolled change is treated as a systemic risk rather than a usability inconvenience (National Institute of Standards and Technology, 2011; Center for Internet Security, 2024). The framework applies these principles to AI interaction itself, treating the conversation as an operational system governed with the same rigor as any other critical workflow.

What problem this framework solves

Most contemporary uses of large language models focus on prompts, tools, or automation outcomes. Far less attention is paid to how Probabilistic Reasoning unfolds across time, how uncertainty accumulates across turns, or how outputs transition from tentative suggestions into accepted state. This absence of interaction Governance is the primary problem the framework addresses.

In ungoverned interactions, probabilistic outputs are frequently reused, summarized, or referenced without explicit Acceptance. Over multiple turns, this behavior causes Interaction-Level Entropy to increase silently. Rejected reasoning remains in context, retries build upon contaminated assumptions, and ambiguity is resolved implicitly rather than explicitly. The result is loss of repeatability, degraded correctness, and an inability to audit or reproduce decisions.

This problem mirrors well-understood failure modes in configuration management and systems governance. NIST identifies uncontrolled change and undocumented state transitions as primary drivers of system unreliability and audit failure (National Institute of Standards and Technology, 2011). Similar risks appear in AI-assisted workflows when outputs are allowed to influence subsequent reasoning without formal control boundaries.

The Entropy-Gated AI Framework solves this by introducing a governance layer that regulates how uncertainty propagates across interactions. It enforces explicit execution boundaries via declared Execution Processes, requires human-directed State Promotion, and makes rejection and Pruning first-class operational actions. By doing so, it enables retries that are clean, comparable, and auditable rather than cumulative and opaque.

The result is not determinism, which is neither achievable nor desirable in probabilistic systems, but controlled correctness. Outcomes become reviewable, reproducible under equivalent conditions, and suitable for professional and enterprise contexts where accountability matters (Thiyagarajan et al., 2024; McKinsey & Company, 2024).

What this framework is

The Entropy-Gated AI Framework is a governance and interaction specification that defines how humans and probabilistic language models operate together when correctness, repeatability, and auditability are required.

At its core, the framework is a protocol for human-directed AI usage. It specifies roles, Authority boundaries, Execution lifecycles, and Entropy Gating mechanisms without prescribing a specific model, provider, interface, or deployment environment. This model-agnostic design reflects the principle that governance must operate above the implementation layer to remain durable as tooling evolves (National Institute of Standards and Technology, 2020).

The framework operates entirely through structured interaction. Authoritative Markdown defines structure, the OIL_CONTRACT defines governance constraints, and the INVOKER declares how execution may occur. Within this structure, Probabilistic Reasoning is permitted only inside explicitly declared Execution Processes, each of which defines how inputs are bound, how outputs are produced, and how results may influence subsequent interaction.

Importantly, the framework does not automate judgment or decision-making. All Authority remains with the ROLE_USER. The ROLE_ASSISTANT functions as a constrained execution engine whose outputs are non-authoritative until explicitly accepted. This separation preserves human accountability while still leveraging the generative and reasoning capabilities of modern language models.
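The separation of roles described above can be modeled in a few lines. The following is a minimal sketch, not part of the framework itself, which needs no runtime or SDK. The `Output` and `Session` classes and the `accept` method are hypothetical names introduced here for illustration; only the role names come from the text.

```python
from dataclasses import dataclass, field
from enum import Enum


class Role(Enum):
    ROLE_USER = "ROLE_USER"
    ROLE_ASSISTANT = "ROLE_ASSISTANT"


@dataclass
class Output:
    """An assistant output; non-authoritative until explicitly accepted."""
    text: str
    accepted: bool = False


@dataclass
class Session:
    """Hypothetical container for state the operator has accepted."""
    accepted_state: list = field(default_factory=list)

    def accept(self, output: Output, actor: Role) -> None:
        # Only the human operator may promote an output into accepted state.
        if actor is not Role.ROLE_USER:
            raise PermissionError("only ROLE_USER may accept outputs")
        output.accepted = True
        self.accepted_state.append(output.text)


session = Session()
draft = Output("finding: configuration differs on host A")
session.accept(draft, Role.ROLE_USER)  # explicit, human-directed Acceptance
```

In this sketch an attempt to call `accept` with `Role.ROLE_ASSISTANT` raises `PermissionError`, which mirrors the rule that the assistant cannot promote its own output.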

In practice, the framework functions as an operational discipline rather than a software product. It is designed to be applied consistently across use cases such as security analysis, configuration comparison, compliance review, and structured reporting, where uncontrolled uncertainty would otherwise undermine trust and repeatability.

Core design objectives

The Entropy-Gated AI Framework is built around explicit design objectives derived from established systems engineering, configuration management, and governance-oriented security practices (National Institute of Standards and Technology, 2011; National Institute of Standards and Technology, 2020).

The primary objective is regulation of Interaction-Level Entropy. Unchecked reuse of Probabilistic Reasoning outputs leads to drift, inconsistency, and loss of auditability. The framework constrains when probabilistic reasoning may occur and how its results may influence State Promotion.

A second objective is preservation of explicit Authority. All decisions affecting Execution, Acceptance, Pruning, or retries must be performed by the ROLE_USER. The ROLE_ASSISTANT is structurally prevented from assuming intent, filling gaps, or performing implicit State Promotion.

The framework also prioritizes clean retries and disciplined failure handling. By enforcing explicit Pruning and bounded Execution Processes, it ensures that rejected reasoning does not contaminate subsequent attempts. This mirrors best practices in configuration drift management, where failed changes are rolled back rather than layered (Thiyagarajan et al., 2024).

Finally, the framework is designed to maintain auditability across long-running sessions. Structural Authority through Markdown, explicit execution declarations, and governed lifecycles allow interactions to be reviewed after the fact with clear attribution of decisions and outcomes. This makes the framework suitable for professional environments where accountability and traceability are required.

Hub and Lab model

The Entropy-Gated AI Framework separates planning and execution into two distinct conceptual spaces: the Hub and the Lab. This separation mirrors long-standing practices in systems engineering, where design, approval, and execution are not conflated.

The Hub functions as a control and coordination space. It is used for planning work, discussing intent, reviewing results, drafting Execution Transmissions, and deciding whether governed Execution is required. Activity in the Hub is not constrained by the framework, and Governance is not enforced there. No Authority is assumed or granted by virtue of discussion alone.

By contrast, Labs are scoped execution environments where governance is enforced. A Lab exists only when an OIL_CONTRACT is sealed and an INVOKER is acknowledged. Within a Lab, execution is bounded, authority is explicit, and Probabilistic Reasoning is regulated through Entropy Gating.
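The condition under which a Lab exists can be expressed as a simple predicate. This is a minimal sketch under the assumption that the two preconditions can be tracked as booleans; `InteractionState` and `current_space` are hypothetical names, not framework identifiers.

```python
from dataclasses import dataclass


@dataclass
class InteractionState:
    """Hypothetical record of the two preconditions for a Lab."""
    contract_sealed: bool = False        # OIL_CONTRACT has been sealed
    invoker_acknowledged: bool = False   # INVOKER has been acknowledged

    @property
    def is_lab(self) -> bool:
        # Both conditions must hold; either one alone is not enough.
        return self.contract_sealed and self.invoker_acknowledged


def current_space(state: InteractionState) -> str:
    """Return "Lab" when governance is in force, otherwise "Hub"."""
    return "Lab" if state.is_lab else "Hub"


state = InteractionState(contract_sealed=True)
space_before = current_space(state)   # sealed contract only
state.invoker_acknowledged = True
space_after = current_space(state)    # both preconditions met
```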

This separation prevents planning artifacts, speculative reasoning, or rejected outputs from contaminating execution. It also enables disciplined review and decision-making prior to committing work to a governed process. Similar separation between planning and execution is emphasized in configuration management guidance, where uncontrolled change is a primary source of drift and failure (National Institute of Standards and Technology, 2011).

Entropy-gated execution and process control

At the core of the Entropy-Gated AI Framework is Entropy Gating, a mechanism that regulates when and how Probabilistic Reasoning may influence outputs or accepted state.

Entropy gating is enforced through explicit declaration of an Execution Process via the INVOKER. Each execution process defines the permitted interaction pattern, including whether execution is SINGLE_PASS, MULTI_TURN, or INTERACTIVE, how inputs are bound, and how outputs may influence subsequent interaction.
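One way to picture an explicit process declaration is as an immutable record whose mode must be one of the three named values. The sketch below is illustrative only: `ExecutionProcess` and `declare_process` are hypothetical names, and the INVOKER specification remains the authority on what each mode actually permits.

```python
from dataclasses import dataclass
from enum import Enum


class ProcessMode(Enum):
    SINGLE_PASS = "SINGLE_PASS"
    MULTI_TURN = "MULTI_TURN"
    INTERACTIVE = "INTERACTIVE"


@dataclass(frozen=True)
class ExecutionProcess:
    """Hypothetical declaration: fixed once made, so it cannot drift."""
    mode: ProcessMode
    bound_inputs: tuple  # inputs bound at declaration time


def declare_process(mode_name: str, inputs) -> ExecutionProcess:
    """Build a declaration, rejecting any mode that is not explicitly named."""
    try:
        mode = ProcessMode(mode_name)
    except ValueError:
        raise ValueError(f"undeclared process mode: {mode_name!r}") from None
    return ExecutionProcess(mode=mode, bound_inputs=tuple(inputs))


process = declare_process("SINGLE_PASS", ["baseline.cfg", "candidate.cfg"])
```

Freezing the dataclass is a design choice for this sketch: an attempt to change `process.mode` after declaration raises an error, which loosely mirrors the idea that the permitted interaction pattern is set at entry and not renegotiated mid-execution.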

Rather than allowing probabilistic outputs to flow freely across a conversation, the framework requires each Execution to occur within a bounded process with clear entry and exit conditions. This approach aligns with contemporary guidance on AI guardrails, which emphasizes containment, verification, and controlled escalation over unrestricted autonomy (McKinsey & Company, 2024).

Rejected or incorrect outputs do not implicitly persist. They are explicitly removed through Pruning, which requires the ROLE_USER to retry from a clean instruction. This prevents Interaction-Level Entropy from compounding across retries and ensures that failed reasoning does not influence later outcomes.
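The effect of Pruning on a retry can be shown with a toy context model. This is a sketch under the assumption that the context passed to the model is the accepted state, any unpruned tentative outputs, and the new instruction; `GovernedContext` and its methods are hypothetical names.

```python
from dataclasses import dataclass, field


@dataclass
class GovernedContext:
    """Hypothetical model of what the next execution would see."""
    accepted: list = field(default_factory=list)  # promoted by the operator
    pending: list = field(default_factory=list)   # tentative, unaccepted outputs

    def record(self, output: str) -> None:
        self.pending.append(output)

    def prune(self) -> int:
        """Remove all tentative outputs and return how many were dropped."""
        removed = len(self.pending)
        self.pending.clear()
        return removed

    def next_context(self, instruction: str) -> list:
        # Anything still pending would leak into the next attempt.
        return [*self.accepted, *self.pending, instruction]


ctx = GovernedContext(accepted=["baseline summary"])
clean = ctx.next_context("compare configurations")

ctx.record("rejected output with a fabricated detail")
contaminated = ctx.next_context("compare configurations")

ctx.prune()
retry = ctx.next_context("compare configurations")
```

After pruning, `retry` equals `clean`, while `contaminated` still carries the rejected output. That equality is what makes successive attempts comparable in this toy model.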

By combining explicit process declaration, Authority enforcement, and disciplined retries, entropy-gated execution enables Quasi-Deterministic behavior suitable for audit and review without attempting to alter the inherently probabilistic nature of the model itself. The theoretical grounding for this approach draws on foundational information theory and modern configuration governance practices (Shannon, 1948; Thiyagarajan et al., 2024).

How to get started

Getting started with the Entropy-Gated AI Framework requires understanding its governance model before attempting execution. The framework is intentionally minimal in mechanism but strict in operation.

A disciplined onboarding sequence is recommended:

- Review the Quick Start to understand the basic execution flow

- Study Entropy and Language Models to understand Interaction-Level Entropy

- Use the Hub to plan execution and draft artifacts such as Execution Transmissions

- Perform governed work only within a Lab under a sealed OIL_CONTRACT

Initial executions SHOULD favor SINGLE_PASS processes to reinforce discipline and repeatability. As familiarity increases, more complex process modes MAY be introduced deliberately and explicitly.

Correct usage is reinforced through practice. The framework does not prevent misuse; it exposes misuse through Refusal, failure, and explicit operator responsibility. This reflects established principles in secure system operation, where tooling supports but does not replace human governance (National Institute of Standards and Technology, 2020).

Repository contents

The repository is structured to preserve clear Authority boundaries between governance specification, execution control, planning artifacts, and explanatory documentation. Each component has a defined role and an explicit scope.

At the core of the repository are the canonical specifications:

- OIL_CONTRACT

The Orchestration Initialization Loader, or OIL Contract, defines authority, constraints, prohibitions, structural rules, and continuity enforcement. Once sealed, it governs Execution behavior within a Lab. The contract is normative and authoritative.

See the OIL_CONTRACT specification.

- INVOKER

The INVOKER is the canonical execution declaration that arms an Execution Process under a sealed OIL_CONTRACT. It defines the active Process Mode, entropy gating rules, and execution permissions without performing execution itself.

See the INVOKER specification.

Execution planning and task definition are expressed as an explicit, separate artifact:

- E_T.md

E_T.md is the canonical Execution Transmission artifact. It defines execution intent, scope, constraints, output requirements, and reporting format in a form that is readable, copyable, and auditable.

E_T.md is planned and generated in the Hub and is treated as a task-definition artifact, not a governance contract and not an execution trigger. It becomes operational only when the ROLE_USER binds it during the execution instruction phase. This preserves the Acceptance Boundary and prevents implicit State Promotion.

Supporting documentation:

- Glossary

Normative definitions of all framework terms.

- References

External research, standards, and guidance used for contextual grounding.

- Quick Start

Minimal procedural walkthrough for first use.

Demonstrative examples are provided in Use Cases. These illustrate governed execution outcomes without embedding assumptions into the core specifications.

Status

The Entropy-Gated AI Framework is stable at the specification and governance level.

The core contracts, including the OIL_CONTRACT, INVOKER, and Execution Transmission model, are intentionally minimal, explicit, and resistant to change. This stability reflects established guidance in systems governance and configuration control, where core control mechanisms are designed to remain fixed while implementations and examples evolve (National Institute of Standards and Technology, 2011; National Institute of Standards and Technology, 2020).

Active development focuses on:

- documentation clarity and operator guidance

- illustrative Use Cases and proof-of-concept executions

- visual explanations and instructional media

- tooling that supports, but does not replace, human Authority

The framework does not pursue feature growth for its own sake. Changes that would weaken authority boundaries, relax Acceptance discipline, or permit implicit State Promotion are considered regressions rather than improvements.

This approach aligns with research emphasizing that governance mechanisms must remain stable to preserve auditability and trust in AI-assisted systems (Bajpai, 2024; Thiyagarajan et al., 2024).